The January/February 2026 updates to the Apprenticeship Accountability Framework (AAF) reshaped how apprenticeship provision is measured and categorised. The number of indicators has been reduced, inspection outcomes are now reflected more directly, and the calculation of apprentices past their planned end date has been tightened. For providers, this brings three themes into sharper focus: closer alignment with inspection judgments, greater scrutiny of live learner progress, and an increased need for robust, real-time performance oversight.

In our webinar, Understanding the changes in the Apprenticeship Accountability Framework, compliance specialist David Lockhart-Hawkins explored what changed and how providers can respond. Alongside the regulatory updates, we also demonstrated how Aptem supports providers to monitor performance in practice, with reporting and dashboard visibility aligned to the AAF.

What has changed in the Apprenticeship Accountability Framework (AAF)?

The updated framework reduces the number of indicators from ten to seven and removes the previous distinction between ‘quality’ and ‘supplementary’ indicators. All remaining measures now sit together as core indicators.

Three key changes stand out.

1. Alignment with the new Ofsted framework

Because Ofsted inspections now use updated judgments, including ‘expected standard’, ‘needs attention’ and ‘urgent improvement’, the AAF has been revised to reflect these categories.

Under the revised framework, a provider will be:

- ‘On track’ if safeguarding is met and there are no ‘needs attention’ or ‘urgent improvement’ judgments at whole-provider or apprenticeship provision level.

- ‘Needs improvement’ if any ‘needs attention’ judgment is received in those areas.

- ‘At risk’ if safeguarding is not met or an ‘urgent improvement’ judgment is given.
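For teams building their own reporting, these categorisation rules can be expressed as a short decision function. The sketch below is illustrative only and is not the department's or Ofsted's implementation: the `Judgment` enum and `inspection_category` function are hypothetical names, and it assumes the judgments at whole-provider and apprenticeship provision level are passed in together as one list. The most severe condition is checked first, so an ‘urgent improvement’ outcome outranks any ‘needs attention’ judgment.

```python
from enum import Enum


class Judgment(Enum):
    """Hypothetical subset of inspection judgments referred to in the revised AAF."""
    EXPECTED_STANDARD = "expected standard"
    NEEDS_ATTENTION = "needs attention"
    URGENT_IMPROVEMENT = "urgent improvement"


def inspection_category(safeguarding_met: bool, judgments: list[Judgment]) -> str:
    """Map inspection outcomes to an AAF category (illustrative sketch).

    `judgments` holds both the whole-provider and the apprenticeship
    provision judgments. The most severe rule is checked first.
    """
    if not safeguarding_met or Judgment.URGENT_IMPROVEMENT in judgments:
        return "At risk"
    if Judgment.NEEDS_ATTENTION in judgments:
        return "Needs improvement"
    return "On track"


print(inspection_category(True, [Judgment.EXPECTED_STANDARD, Judgment.NEEDS_ATTENTION]))
# Needs improvement
```

Checking the ‘at risk’ conditions before ‘needs improvement’ mirrors the way the framework escalates: a provider with both a ‘needs attention’ and an ‘urgent improvement’ judgment lands in the more serious category.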

This change makes inspection outcomes more visibly connected to AAF categorisation.

2. Removal of certain indicators

Off-the-job hours, breaks in learning and the EPAO field have been removed from the framework in their previous format. However, as discussed in the webinar, this does not mean they are no longer relevant. Breaks in learning, for example, may still be considered as contextual factors in discussions outside the headline indicators.

3. A major refinement to the ‘past planned end date’ measure

The most operationally significant update relates to apprentices past their planned end date.

The revised measure focuses specifically on apprentices who are:

- Still live

- Not in gateway

- Past their planned end date

There is no longer a 90- or 180-day tolerance built into the headline metric. The proportion is calculated against the provider’s total ILR population for the year. If 10% or more, but less than 15%, of apprentices fall into this category, the provider moves into ‘needs improvement’; 15% or more places it ‘at risk’.
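As a worked illustration of these thresholds, the sketch below bands a provider by the share of its total ILR population that is live, not in gateway and past its planned end date. The function name is hypothetical, and it assumes that a share below 10% counts as ‘on track’ for this indicator; check the published specification before relying on it.

```python
def past_ped_category(past_ped_count: int, total_ilr_population: int) -> str:
    """Band the share of apprentices past their planned end date (sketch).

    Integer cross-multiplication avoids floating-point edge cases at
    exactly 10% or 15%.
    """
    if total_ilr_population <= 0:
        raise ValueError("total_ilr_population must be positive")
    if past_ped_count * 100 >= 15 * total_ilr_population:
        return "At risk"            # 15% or more
    if past_ped_count * 100 >= 10 * total_ilr_population:
        return "Needs improvement"  # 10% up to, but not including, 15%
    return "On track"               # assumption: under 10%


for count in (90, 100, 149, 150):
    print(count, past_ped_category(count, 1000))
```

With a population of 1,000, 90 learners (9%) stays ‘on track’, 100 (10%) and 149 fall into ‘needs improvement’, and 150 (15%) moves the provider to ‘at risk’.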

Correct ILR coding, particularly for learners in gateway, is essential to ensure the calculation reflects actual performance.

Please note: this area was updated by the department on 17th February, after our webinar was recorded, to clarify how this measure is calculated, because the original calculation was not in line with the specification. Under the clarification, a learner is included if their programme aim has no actual end date and its learning planned end date is at least one day before the freeze date for the funding return, as listed in the ILR freeze schedule (column B of the schedule).

Therefore, the calculation includes learners who are:

- Still in learning, with no actual end date

- Not in gateway (learners in gateway should have an actual end date in the ILR and outcome code 8)

- Past their planned end date, meaning the planned end date is at least one day before the ILR freeze date of the latest return

For example, the freeze date for the R07 return is 5 March 2026. If Bob’s programme aim had a planned end date of 3 March 2026 and no actual end date, he would be included in this calculation.
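The clarified inclusion rule can be sketched as a simple check on each programme aim. This is a minimal illustration, assuming a hypothetical `ProgrammeAim` record holding planned and actual end dates taken from the ILR; it is not the department's calculation, and real ILR data carries many more fields. Gateway learners are excluded implicitly because, when coded correctly, they already have an actual end date.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class ProgrammeAim:
    """Hypothetical, simplified view of a learner's programme aim."""
    learner: str
    planned_end_date: date
    # Learners in gateway should already have an actual end date
    # (with outcome code 8), so they drop out of the check below.
    actual_end_date: Optional[date] = None


def included_in_past_ped(aim: ProgrammeAim, freeze_date: date) -> bool:
    """True if the aim counts towards the past-planned-end-date measure."""
    still_in_learning = aim.actual_end_date is None
    cutoff = freeze_date - timedelta(days=1)  # at least one day before freeze
    return still_in_learning and aim.planned_end_date <= cutoff


r07_freeze = date(2026, 3, 5)  # R07 freeze date from the example
bob = ProgrammeAim("Bob", planned_end_date=date(2026, 3, 3))
print(included_in_past_ped(bob, r07_freeze))  # True
```

A planned end date of 5 March 2026 (the freeze date itself) would not be included, while 4 March 2026 would, because it falls exactly one day before the freeze.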

Looking beyond the framework: the Provider Agreement

A further point strongly emphasised by David during the session was that the AAF should not be read in isolation. He advised providers to review the Accountability Framework specification alongside Schedule 2 of their Provider Agreement.

As he put it:

“You need to look at the two together, because it’s in that Provider Agreement where your contractual obligations are made clearer and where the intervention steps are set out more clearly. It also covers the contextual factors that the department would take into account, even outside of the framework, and explains how a review of performance in apprenticeship units and foundation apprenticeships will have some bearing on how things are going.”

Why governance and data accuracy matter more than ever

As David emphasised during the session, the framework represents minimum expectations, and he warned that treating the thresholds as targets leaves little room for contingency.

The more effective approach is to build internal performance measures that sit above the framework’s minimums and to monitor them routinely. That requires strong visibility of ILR data, programme design and learner progress.

Key questions for providers include:

- Are learners progressing in line with planned duration?

- Are there early signs of slippage in specific programmes?

- Is programme design realistic in terms of duration and delivery model?

- How many live learners are approaching their planned end date, and what support is in place?
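The last question lends itself to a routine internal check. The sketch below lists live learners whose planned end date falls within a chosen window, so that support can be arranged before they pass it. The `Learner` record, the `approaching_planned_end` function and the 60-day window are all hypothetical choices for illustration, not part of the AAF.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Learner:
    """Hypothetical, simplified learner record."""
    name: str
    planned_end_date: date
    actual_end_date: Optional[date] = None  # None while still in learning


def approaching_planned_end(learners: list[Learner], today: date,
                            window_days: int = 60) -> list[Learner]:
    """Live learners whose planned end date falls within the next `window_days`.

    The 60-day default is an illustrative internal trigger, not an AAF rule.
    Results are sorted so the most urgent cases come first.
    """
    horizon = today + timedelta(days=window_days)
    due = [
        learner for learner in learners
        if learner.actual_end_date is None
        and today <= learner.planned_end_date <= horizon
    ]
    return sorted(due, key=lambda learner: learner.planned_end_date)


cohort = [
    Learner("Asha", date(2026, 4, 10)),
    Learner("Ben", date(2026, 9, 1)),
    Learner("Cara", date(2026, 3, 20)),
    Learner("Dev", date(2026, 3, 25), actual_end_date=date(2026, 3, 1)),
]
print([learner.name for learner in approaching_planned_end(cohort, date(2026, 3, 1))])
# ['Cara', 'Asha']
```

Setting the window as an internal measure, rather than waiting for the headline metric, reflects the idea of monitoring above the framework's minimums.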

Context will always matter, particularly where a provider is flagged within the framework. However, the ability to demonstrate clear internal monitoring and proactive action significantly strengthens any discussion.

How Aptem can support stronger AAF governance

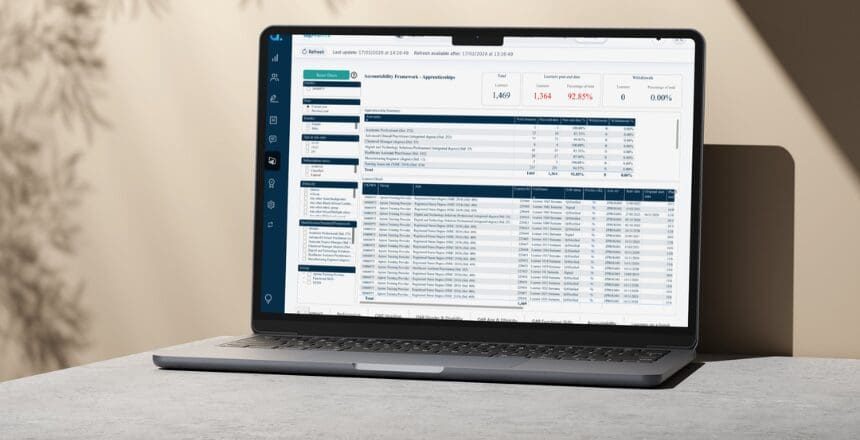

During the webinar, Aptem’s Katie Thornton demonstrated how providers can use Aptem’s built-in tools to actively manage risk, particularly around live learner progress and apprentices nearing or exceeding their planned end date. The focus was clear: give teams real-time visibility, surface risk early, and turn insight into action.

Key features highlighted included:

Real-time dashboards and “requires attention” indicators provide visibility at organisation, tutor and learner level. Teams can see where learners are against their planned duration and identify those nearing or exceeding their end date. Dashboards allow users to review RAG-rated performance, monitor KSB progress and track outstanding actions. Learning plan progress links directly into this wider view, helping tutors see where a learner should be at a specific snapshot in time. In the context of the revised AAF, this supports the requirement to monitor live learners closely and intervene promptly where progress slows.

Structured action planning within reviews was highlighted as particularly valuable functionality for Aptem customers. Tutors can create initial action plan reviews and follow them up with progress-based reviews. Actions can be updated as circumstances change and carried forward into future reviews, creating a continuous thread of accountability. This provides a clear, auditable record of agreed actions, completed support and outstanding tasks. Review responses are available in OData, and features such as review summarisation and meeting notes support consistent review planning and evidence capture. This not only strengthens internal quality assurance but also supports inspection readiness by demonstrating structured oversight and follow-through.

Capturing reasons for delay and identifying gaps also play a critical role.

Tutors can record key reasons for delay and interrogate areas of the learning plan showing little or no progress. This shifts the conversation from “the learner is behind” to “why is the learner behind?”, enabling targeted, evidence-based support. This aligns with the AAF’s emphasis on active monitoring rather than retrospective explanation.

Checkpoint was presented as a practical tool for checking learner understanding. This popular tool presents a series of AI-generated questions designed to gauge learner comprehension of the Knowledge, Skills and Behaviours (KSBs) within their Standard. Learners can independently test their understanding of recently covered KSBs and receive useful feedback without coach involvement. Tutors can also create manual checkpoints by selecting specific KSBs and using a mix of theory-based and scenario-based questions. Once a checkpoint is completed, tutors can see what the learner got right, what they got wrong and how long it took them. Learners can also use the 24-hour virtual assistant via “get help with this question”. This helps determine whether delays relate to understanding, engagement or support needs, and informs focused next steps.

Skills radar and tripartite visibility

The skills radar is a skills scan that enables learners, tutors and employers to see distance travelled and identify gaps collaboratively. This supports transparent discussions about progress, curriculum coverage and development, which are central to inspection judgments.

Together, these tools enable providers to move from reactive reporting to proactive performance management. In the context of the revised AAF, that means identifying risk before thresholds are breached and demonstrating structured intervention when learners fall behind.

Looking ahead: strengthening performance oversight

The revised framework reinforces the importance of routine self-assessment and continuous improvement. Providers are expected to review their performance, identify quality issues and take action where needed. The updated indicators, particularly around inspection judgments and live learners past planned end dates, bring greater emphasis to these processes.

A structured management approach that combines accurate ILR data, clear programme design, regular reviews and real-time tracking supports stronger oversight. Rather than reacting to headline metrics, providers can build systems that surface risk early and enable timely support.

If you would like a deeper dive into the changes, the strategic implications and a walkthrough demonstration of how Aptem can support providers to navigate this change, watch the full webinar recording here.

- If you’re already an Aptem customer: speak to your Customer Success Manager about support with reviewing your dashboards, reports or configuration in light of the updated AAF.

- If you’re considering switching to Aptem: contact our team to arrange a tailored demonstration and explore how a joined-up management platform can support stronger oversight of learner progress and AAF performance.